All you need to know about Photogrammetry

In short, photogrammetry is the science of making measurements from photographs.

The input to photogrammetry is photographs, and the output is typically a map, a drawing, a measurement, or a 3D model of some real-world object or scene. Many of the maps we use today are created with photogrammetry and photographs taken from aircraft.

Digital photogrammetry works from images of an object captured from different locations and angles with a standard digital camera. The computer detects overlapping patterns across the images to build up a 3D reconstruction of the photographed object.

Methods

Photogrammetry uses methods from many disciplines, including optics and projective geometry. Digital image capture and photogrammetric processing include several well-defined stages, which allow the generation of 2D or 3D digital models of the object as an end product.

Photogrammetric methods work with four main variables. The 3D coordinates define the locations of object points in 3D space. The image coordinates define the locations of the object points’ images on the film or an electronic imaging device. The exterior orientation of a camera defines its location in space and its view direction. The inner orientation defines the geometric parameters of the imaging process: primarily the focal length of the lens, but it can also include a description of lens distortions. Additional observations also play an important role: scale bars (a known distance between two points in space) or known fixed points create the connection to the basic measuring units. Each of the four main variables can be an input or an output of a photogrammetric method.
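As a rough illustration of how these variables relate, the sketch below projects a 3D object point to image coordinates with an ideal pinhole camera. The function name `project_point` and its parameters are hypothetical, chosen for this example only. Lens distortion is ignored, and the rotation matrix is assumed to hold the camera axes as rows.

```python
def project_point(point, camera_position, rotation, focal_length):
    """Project a 3D world point to 2D image coordinates (ideal pinhole camera).

    point, camera_position: (x, y, z) in world units.
    rotation: 3x3 nested list; each row is one camera axis in world coordinates.
    focal_length: in the units wanted for the image coordinates (e.g. mm).
    """
    # Exterior orientation: express the point relative to the camera.
    offset = [p - c for p, c in zip(point, camera_position)]
    xc, yc, zc = (sum(r * o for r, o in zip(row, offset)) for row in rotation)
    if zc <= 0:
        raise ValueError("point is not in front of the camera")
    # Inner orientation: perspective division scaled by the focal length.
    # Lens distortion is deliberately ignored in this sketch.
    return focal_length * xc / zc, focal_length * yc / zc


identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]  # camera looks along world +z
u, v = project_point((1.0, 2.0, 10.0), (0.0, 0.0, 0.0), identity, 50.0)
print(u, v)
```

Here the 3D coordinates, exterior orientation, and inner orientation are inputs and the image coordinates are the output. Other photogrammetric methods swap these roles.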

Algorithms for photogrammetry typically attempt to minimize the sum of the squares of errors over the coordinates and relative displacements of the reference points. This minimization is known as bundle adjustment and is often performed using the Levenberg–Marquardt algorithm.
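One common way to write this least-squares objective is as a sum of squared reprojection errors. The symbols below are assumptions introduced for illustration: $X_i$ is the 3D position of reference point $i$, $C_j$ collects the inner and exterior orientation of camera $j$, $x_{ij}$ is the measured image position of point $i$ in photo $j$, $\pi$ is the projection function, and $v_{ij}$ is 1 if point $i$ is visible in photo $j$ and 0 otherwise.

```latex
\min_{\{C_j\},\,\{X_i\}} \; \sum_{i=1}^{n} \sum_{j=1}^{m}
    v_{ij} \, \bigl\| x_{ij} - \pi(C_j, X_i) \bigr\|^2
```

Because $\pi$ is nonlinear in the camera parameters, the problem is solved iteratively, which is where a method such as Levenberg–Marquardt comes in.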

Stereophotogrammetry

Stereophotogrammetry involves estimating the three-dimensional coordinates of points on an object employing measurements made in two or more photographic images taken from different positions (see stereoscopy). Common points are identified on each image. A line of sight can be constructed from the camera location to the point on the object. It is the intersection of these rays that determines the three-dimensional location of the point. More sophisticated algorithms can exploit other information about the scene that is known a priori, for example symmetries, in some cases allowing reconstructions of 3D coordinates from only one camera position. Stereophotogrammetry is emerging as a robust non-contacting measurement technique to determine dynamic characteristics and mode shapes of non-rotating and rotating structures.
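The ray intersection described above can be sketched as follows. With real measurements the two lines of sight rarely meet exactly, so this example returns the midpoint of the shortest segment between them. That is one simple choice among several and not necessarily what any particular software does. The function name and arguments are hypothetical.

```python
def triangulate_midpoint(origin1, dir1, origin2, dir2):
    """Estimate a 3D point from two lines of sight.

    Each ray starts at a camera location (origin) and points along dir.
    Returns the midpoint of the shortest segment connecting the two rays.
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    w = [p - q for p, q in zip(origin1, origin2)]
    a, b, c = dot(dir1, dir1), dot(dir1, dir2), dot(dir2, dir2)
    d, e = dot(dir1, w), dot(dir2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no unique closest point")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = [o + s * k for o, k in zip(origin1, dir1)]
    p2 = [o + t * k for o, k in zip(origin2, dir2)]
    return tuple((u + v) / 2 for u, v in zip(p1, p2))


# Two cameras 2 units apart, both sighting the point (1, 1, 5).
point = triangulate_midpoint((0, 0, 0), (1, 1, 5), (2, 0, 0), (-1, 1, 5))
print(point)
```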

Integration

Photogrammetric data can be integrated with dense range data so that the two sources complement each other. Photogrammetry is more accurate in the x and y directions, while range data are generally more accurate in the z direction. Range data can be supplied by techniques like LiDAR, laser scanners (using time of flight, triangulation or interferometry), white-light digitizers and any other technique that scans an area and returns x, y, z coordinates for multiple discrete points (commonly called “point clouds”). Photos can clearly define the edges of buildings when the point cloud footprint cannot. It is beneficial to incorporate the advantages of both systems and integrate them to create a better product.

A 3D visualization can be created by georeferencing the aerial photos and LiDAR data in the same reference frame, orthorectifying the aerial photos, and then draping the orthorectified images on top of the LiDAR grid.

It is also possible to create digital terrain models, and thus 3D visualizations, using pairs (or multiples) of aerial photographs or satellite images. Techniques such as adaptive least squares stereo matching are then used to produce a dense array of correspondences, which are transformed through a camera model to produce a dense array of x, y, z data. These data can be used to produce digital terrain model and orthoimage products. Systems that use these techniques, e.g. the ITG system, were developed in the 1980s and 1990s but have since been supplanted by LiDAR and radar-based approaches, although these techniques may still be useful in deriving elevation models from old aerial photographs or satellite images.
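To give a feel for how a correspondence becomes an x, y, z value, here is a minimal sketch for the simplest possible camera model: an idealized, rectified stereo pair in which both cameras share the same focal length and are separated by a known baseline. Real aerial and satellite camera models are considerably more involved. All names below are hypothetical.

```python
def correspondence_to_xyz(u_left, v_left, u_right, focal_px, baseline_m, cx, cy):
    """Convert one matched pixel pair into x, y, z (rectified stereo only).

    u_left, v_left: pixel position of the feature in the left image.
    u_right: horizontal pixel position of the same feature in the right image.
    focal_px: focal length in pixels; baseline_m: camera separation in metres.
    cx, cy: pixel coordinates of the principal point.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity   # depth along the viewing direction
    x = (u_left - cx) * z / focal_px        # back-project the pixel at that depth
    y = (v_left - cy) * z / focal_px
    return x, y, z


print(correspondence_to_xyz(600, 400, 575, 1000.0, 0.5, 500, 400))
```

Applying a function like this to every correspondence found by the stereo matcher yields the dense array of x, y, z data.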

Application

Photogrammetry is used in fields such as topographic mapping, architecture, engineering, manufacturing, quality control, police investigation, cultural heritage, and geology. Archaeologists use it to quickly produce plans of large or complex sites, and meteorologists use it to determine the wind speed of tornados when objective weather data cannot be obtained.

It can be used to combine live action with computer-generated imagery in movie post-production; The Matrix is a good example of the use of photogrammetry in film. Photogrammetry was used extensively to create photorealistic environmental assets for video games, including The Vanishing of Ethan Carter and EA DICE’s Star Wars Battlefront. The main character of the game Hellblade: Senua’s Sacrifice was derived from photogrammetric motion-capture models taken of actress Melina Juergens.

Photogrammetry is also commonly employed in collision engineering, especially with automobiles. When litigation for accidents occurs and engineers need to determine the exact deformation present in the vehicle, it is common for several years to have passed and the only evidence that remains is accident scene photographs taken by the police. Photogrammetry is used to determine how much the car in question was deformed, which relates to the amount of energy required to produce that deformation. The energy can then be used to determine important information about the crash (such as the velocity at time of impact).
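The last step can be illustrated with a deliberately simplified calculation. It assumes that all of the vehicle's kinetic energy went into the measured deformation, as in an impact with a rigid barrier that brings the car to rest. Real reconstructions account for much more, such as the other vehicle and post-impact motion. The function name is hypothetical.

```python
import math


def speed_from_deformation_energy(energy_joules, vehicle_mass_kg):
    """Estimate impact speed (m/s) from E = 1/2 * m * v^2.

    Simplifying assumption: every joule of kinetic energy was absorbed
    by deforming this one vehicle.
    """
    if energy_joules < 0 or vehicle_mass_kg <= 0:
        raise ValueError("energy must be non-negative and mass positive")
    return math.sqrt(2.0 * energy_joules / vehicle_mass_kg)


speed = speed_from_deformation_energy(112_500.0, 1_000.0)
print(f"{speed:.1f} m/s ({speed * 3.6:.0f} km/h)")
```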

Photogrammetry Software List

Photogrammetry is an ingenious technique for 3D scanning. You can capture large objects, like buildings or even mountains, that would be impossible to scan using other methods. Photogrammetry is also affordable, since you probably already own the most important piece of equipment: your smartphone camera. All that’s left is photogrammetry software to create a 3D file of the object you have photographed.

FREE AND OPEN SOURCE SOFTWARE:

- COLMAP

- Meshroom

- MicMac

- Multi-View Environment

- OpenMVG

- Regard3D

- VisualSFM

- 3DF Zephyr (limited version free)

PAY TO USE SOFTWARE:

- Autodesk ReCap ( from $40/month )

- Agisoft Metashape ( from $179 )

- Bentley ContextCapture ( on request )

- Correlator 3D ( from $250/month )

- DatuSurvey ( from $350/month )

- Elcovision 10 ( on request )

- iWitness Pro ( $2,495 )

- IMAGINE Photogrammetry ( on request )

- LiMapper ( on request )

- Photomodeler ( one time fee of $995 or from $49/month )

- Pix4D ( one time fee of €3,990 or €260/month or €217/month if paid annually )

- RealityCapture ( from $99 for 3 months )

- SOCET GXP ( on request )

- Trimble Inpho ( on request )

- WebODM ( from $57 )

Drone Photogrammetry

Drone photogrammetry requires enough photos, taken from the optimal height, to give you the best ground sample distance (the real-world distance covered by one image pixel). You also need an 80% overlap between neighboring pictures. This is necessary for two reasons:

- For the computer to stitch images together to make the orthophoto (a 2D aerial image that’s been corrected for distortion).

- To capture enough angles to create a digital terrain model, or DTM (the shape of your surface).

When combined, the orthophoto and DTM create the 3D model of your site.

We can’t overstate the importance of steady, consistent flight in getting these photos right for drone photogrammetry. The best way to achieve that is with a flight planning app.
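The link between flight height, ground sample distance, and overlap can be sketched with the standard pinhole-camera relations. This is a minimal illustration with hypothetical function names. It assumes the camera points straight down over flat ground, and the camera values in the example are made up for the calculation.

```python
def ground_sample_distance(sensor_size_mm, focal_length_mm, image_size_px, height_m):
    """Ground distance covered by one pixel, in metres.

    sensor_size_mm and image_size_px must be measured along the same image axis.
    """
    return (sensor_size_mm * height_m) / (focal_length_mm * image_size_px)


def photo_spacing(sensor_size_mm, focal_length_mm, height_m, overlap=0.8):
    """Distance to travel between photos to achieve the given overlap fraction."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in the range [0, 1)")
    footprint_m = sensor_size_mm * height_m / focal_length_mm  # ground covered by one photo
    return footprint_m * (1.0 - overlap)


# Assumed example camera: 13.2 mm sensor, 8.8 mm lens, 5472 px across, flown at 100 m.
gsd = ground_sample_distance(13.2, 8.8, 5472, 100.0)
spacing = photo_spacing(13.2, 8.8, 100.0, overlap=0.8)
print(f"GSD: {gsd * 100:.2f} cm/pixel, spacing between photos: {spacing:.1f} m")
```

Flying lower improves the ground sample distance but shrinks each photo's footprint, so more photos are needed to keep the same overlap. A flight planning app handles this trade-off for you.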

3D Scanning vs. Photogrammetry

Digital 3D models are used for many different purposes in a variety of industries, and there are multiple methods of making them from a real object. The two primary methods for digital 3D modeling are 3D scanning and photogrammetry.

3D Scanning

Two varieties of 3D scanning are most commonly compared with photogrammetry: laser and white light 3D scanning. Laser scanning uses a laser to measure an object’s geometry and creates the model from the data obtained. The laser beam is swept across the surface, and the device uses the beam projector’s angle encoders and the return “time of flight” to calculate the location of each point in 3D space. Once all of the points are captured and recorded, a dense point cloud results. To capture a complete object, the laser scanner or the object is moved and the scan is repeated.
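The calculation for a single point can be sketched as follows. The light travels to the surface and back, so the range is half the round-trip distance, and the two beam angles then place the point in space. The function name and the angle convention (azimuth in the horizontal plane, elevation above it) are assumptions for this example.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def tof_point(round_trip_time_s, azimuth_rad, elevation_rad):
    """Convert one time-of-flight reading plus beam angles into x, y, z (metres)."""
    # The pulse covers the distance twice: out to the surface and back.
    distance = SPEED_OF_LIGHT * round_trip_time_s / 2.0
    horizontal = distance * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        distance * math.sin(elevation_rad),
    )


# A return after about 66.7 nanoseconds corresponds to a surface roughly 10 m away.
print(tof_point(66.7e-9, math.radians(30.0), math.radians(10.0)))
```

Repeating this for every angle pair in the sweep produces the point cloud.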

White light 3D scanning utilizes a projector (often LCD) and one or more cameras to map an area or object. Light patterns are projected onto the surface, and the camera then records the surface by measuring where and how the light deforms around it. To ensure every angle is captured, the scanner is moved around the object, or the object is moved in front of the scanner. The result is a point cloud similar to what laser 3D scanning produces, with the same option of producing a polygonal mesh.

Pros and Cons of 3D Scanning

3D scanners have several advantages, primarily their high accuracy and high resolution. This method performs well for smaller parts and can create data points in real time. This can save time, as you’ll see which areas have been missed or need to be rescanned before taking the model into the design phase.

However, light interference can produce unfavorable 3D scans. Both laser and white light scanners read light sources to collect the data. If there’s too much ambient light, the collected data may be distorted. Because of this, 3D scanning is best used where the lighting can be controlled. Similarly, 3D scanners have trouble with shiny or reflective surfaces. These surfaces tend to send the light away from the input sensors, which makes it difficult to get a quality scan.

One of the biggest disadvantages of 3D scanners is the price. The machinery can cost tens of thousands of dollars, and as technology advances, you’ll need to purchase new scanners to keep up. Another disadvantage is the size and transportability of the device.

Photogrammetry

Photogrammetry is another method used to create 3D models. Instead of using active light sources, this technology uses photographs to gather data. To create a 3D model using photogrammetry, photos are taken from a variety of angles to capture every part of the subject, with overlap from picture to picture. This overlap is necessary for the software to align the photos appropriately. Once all of the images are taken, they are imported into the photogrammetry software, which aligns the pictures, plots data points, and calculates the distance and location of each point in 3D space. The result is a 3D point cloud that can be used to create a polygonal mesh, just like 3D scanning.

There are three methods of using photogrammetry: manual, target, and dense matching.

- Manual: In the manual method, the operator identifies matching points across photos. It is the slowest method and generates a low point count. Though it’s not the best for every task, it does allow you to capture exactly what you want.

- Targets: The target method is automated and much faster, producing a higher point count. There is a bit more setup time involved, but it is incredibly accurate.

- Dense Matching: The dense matching method is the most similar to 3D scanning, generating dense point clouds that can be used in a variety of applications.

The main advantage of using photogrammetry over 3D scanning is price and accessibility. A camera and photogrammetry software are usually less expensive, and much easier to transport!

Another important advantage of photogrammetry is its ability to reproduce an object in full color and texture. Though some 3D scanners can produce this as well, photogrammetry is the method to use when you’re looking for realism.

Lastly, an interesting advantage is that photogrammetry can work at many scales and sizes. You can model the tip of a finger or a whole mountain range. 3D scanners, by contrast, are limited to objects of a particular size.

Photogrammetry has its disadvantages. Because surface texture plays a big part in how the reference points are found, working with smooth, flat, or solid-colored surfaces can be difficult. Photogrammetry projects can sometimes involve more in-office processing time as well.

To conclude the comparison:

3D photogrammetry and laser scanning are both efficient technologies, but they suit different applications. When choosing between photogrammetry and 3D scanning, you really have to consider your needs and applications.

Photogrammetry Tutorials

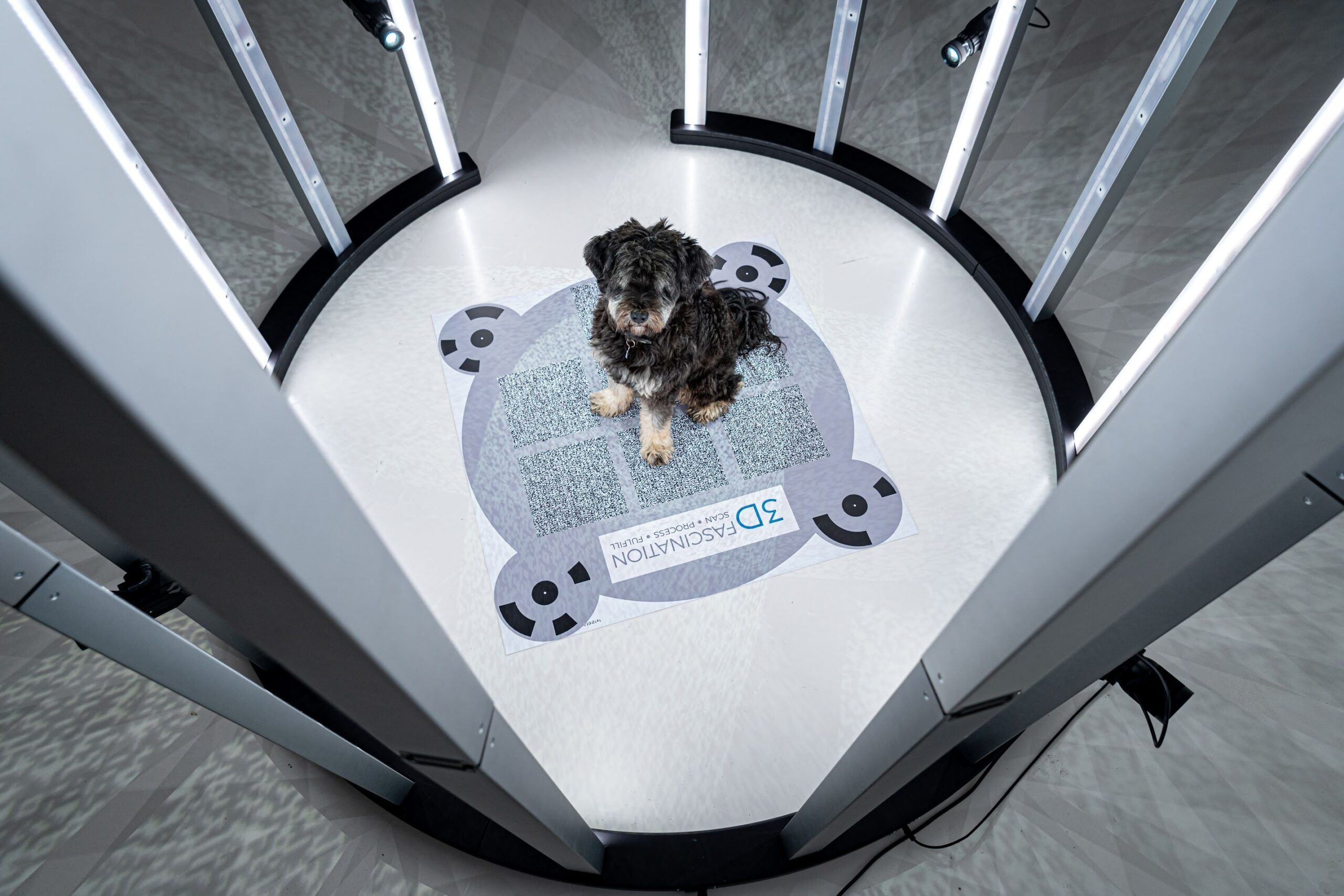
